Business needs are often such that users' security restrictions change periodically as job roles, work locations and/or other attributes change. A solution that accommodates the addition, update and removal of users seamlessly and dynamically in SSAS is critical to reducing latency and minimizing user security maintenance. The idea behind using an SSAS stored procedure to enforce dimension security dynamically is rather straightforward: while all users belong to a single SSAS database role, each user's data access is restricted to the set of dimension members that the SSAS stored procedure determines, at run time, that the user is permitted to see.

Recently my team identified a requirement to refine our overall SSAS security scheme: the data available in our cubes needed to be filtered for authorized users by their sales location(s). The list of users and their authorized sales locations is maintained in a set of M:N relational tables in one of our SQL Server databases, which our ETL refreshes regularly. For simplicity, a database view denormalizes the data in these tables into "user alias-to-sales location" pairs, as shown in the mock-up here.

| UserAlias | SalesLocation |
|---|---|
| usera | United States |
| usera | Canada |
| userb | United States |
| userc | Hong Kong |
| userd | United States |
| userd | Germany |
| userd | UK |
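To make the data model concrete, here is a minimal sketch assuming a hypothetical three-table M:N schema (Users, SalesLocations and the bridge table UserSalesLocations) and a view named vw_UserSalesLocation; none of these names come from our actual database. It uses Python's built-in sqlite3 module as a stand-in for SQL Server, so the SQL dialect differs slightly from T-SQL.

```python
import sqlite3

# Hypothetical M:N schema standing in for the SQL Server tables described above;
# table, column, and view names are illustrative assumptions, not the real schema.
SCHEMA = """
CREATE TABLE Users (UserId INTEGER PRIMARY KEY, UserAlias TEXT NOT NULL UNIQUE);
CREATE TABLE SalesLocations (LocationId INTEGER PRIMARY KEY, SalesLocation TEXT NOT NULL UNIQUE);
CREATE TABLE UserSalesLocations (
    UserId INTEGER NOT NULL REFERENCES Users(UserId),
    LocationId INTEGER NOT NULL REFERENCES SalesLocations(LocationId),
    PRIMARY KEY (UserId, LocationId)
);
-- The view flattens the bridge table into "user alias-to-sales location" pairs.
CREATE VIEW vw_UserSalesLocation AS
SELECT u.UserAlias, l.SalesLocation
FROM UserSalesLocations AS ul
JOIN Users AS u ON u.UserId = ul.UserId
JOIN SalesLocations AS l ON l.LocationId = ul.LocationId;
"""


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database loaded with the mock-up pairs from the table above."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    pairs = [
        ("usera", "United States"), ("usera", "Canada"),
        ("userb", "United States"), ("userc", "Hong Kong"),
        ("userd", "United States"), ("userd", "Germany"), ("userd", "UK"),
    ]
    for alias, location in pairs:
        # Each user and each location is stored once; the bridge row links them.
        conn.execute("INSERT OR IGNORE INTO Users (UserAlias) VALUES (?)", (alias,))
        conn.execute("INSERT OR IGNORE INTO SalesLocations (SalesLocation) VALUES (?)", (location,))
        conn.execute(
            """INSERT INTO UserSalesLocations (UserId, LocationId)
               SELECT u.UserId, l.LocationId FROM Users u, SalesLocations l
               WHERE u.UserAlias = ? AND l.SalesLocation = ?""",
            (alias, location),
        )
    return conn


if __name__ == "__main__":
    conn = build_demo_db()
    query = "SELECT UserAlias, SalesLocation FROM vw_UserSalesLocation ORDER BY UserAlias, SalesLocation"
    for row in conn.execute(query):
        print(row)
```

The view reproduces the seven pairs of the mock-up, while the underlying tables store each user and each location only once.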

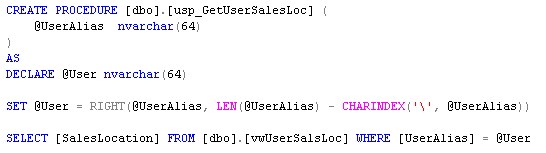

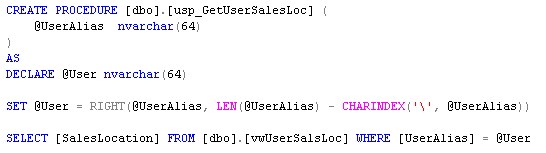

To guard against SQL injection attacks, a SQL Server stored procedure is used to look up the data in the view. The stored procedure (listed below) takes the alias of the current user as an argument and returns the corresponding sales locations. The domain portion of the login name is stripped off for our purposes.
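Why a parameterized lookup helps can be illustrated with a small Python sketch. Here sqlite3 again stands in for SQL Server, a plain table named vw_UserSalesLocation stands in for the view, and strip_domain() and get_user_sales_locations() are hypothetical helpers playing the role of [usp_GetUserSalesLoc]; they are not the actual procedure. The key detail is that the alias is passed as a bound parameter rather than concatenated into the query text.

```python
import sqlite3


def strip_domain(login: str) -> str:
    """Turn a Windows login such as 'CONTOSO\\usera' into the bare alias 'usera'.

    Assumes the DOMAIN\\alias form; the alias is lowercased to match the
    (assumed lowercase) values stored in the view.
    """
    return login.rsplit("\\", 1)[-1].lower()


def get_user_sales_locations(conn: sqlite3.Connection, login: str) -> list[str]:
    """Hypothetical stand-in for usp_GetUserSalesLoc: sales locations for one user.

    The alias is bound through the '?' placeholder and never spliced into the
    SQL text, so input such as "x' OR '1'='1" is treated as a literal value.
    """
    alias = strip_domain(login)
    cur = conn.execute(
        "SELECT SalesLocation FROM vw_UserSalesLocation WHERE UserAlias = ? ORDER BY SalesLocation",
        (alias,),
    )
    return [row[0] for row in cur.fetchall()]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    # A plain table stands in for the denormalized view in this demo.
    conn.execute("CREATE TABLE vw_UserSalesLocation (UserAlias TEXT, SalesLocation TEXT)")
    conn.executemany(
        "INSERT INTO vw_UserSalesLocation VALUES (?, ?)",
        [("usera", "United States"), ("usera", "Canada"), ("userc", "Hong Kong")],
    )
    print(get_user_sales_locations(conn, r"CONTOSO\usera"))  # ['Canada', 'United States']
    print(get_user_sales_locations(conn, "x' OR '1'='1"))    # [] (no such alias)
```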

Our SSAS stored procedure (shown below) is implemented as the GetFilteredSet_SalesLocation() method of the UserSecurity C# class library. It uses the [usp_GetUserSalesLoc] SQL Server stored procedure to retrieve the user's sales locations and returns their unique names.

The GetFilteredSet_SalesLocation() method takes three string arguments: the alias of the current user, the name of the SQL Server instance where [usp_GetUserSalesLoc] resides (the database name is hard-coded in the method; yes, I know), and the dimension hierarchy level. Passing the hierarchy level as an argument lets us reuse the same SSAS stored procedure across multiple SSAS databases and cubes that have similar security filtering requirements but different dimensional schemas. The method then enumerates the data set returned by [usp_GetUserSalesLoc] and assembles an MDX set as an output string of the form:

{[Dimension].[Hierarchy].[Level].&[MemberName], [Dimension].[Hierarchy].[Level].&[MemberName], [Dimension].[Hierarchy].[Level].&[MemberName], ...}
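The string assembly step might look roughly like the following Python sketch; build_member_set() and mdx_quote() are hypothetical names, not the actual C# method, and the level name in the example is made up. It assumes, as the template above implies, that the sales location name doubles as the member key referenced with &[...], and it doubles any "]" inside a name, which is how MDX escapes a closing bracket within a bracketed identifier.

```python
def mdx_quote(name: str) -> str:
    """Wrap a name in MDX square brackets, doubling any ']' inside it."""
    return "[" + name.replace("]", "]]") + "]"


def build_member_set(level: str, member_keys: list[str]) -> str:
    """Assemble '{level.&[key1], level.&[key2], ...}' for STRTOSET.

    `level` is a fully qualified level such as
    '[Geography].[Sales Location].[Sales Location]' (a hypothetical name).
    Duplicate keys are dropped while preserving their original order.
    An empty input yields '{}', i.e. an empty set.
    """
    unique_keys = list(dict.fromkeys(member_keys))
    members = (f"{level}.&{mdx_quote(key)}" for key in unique_keys)
    return "{" + ", ".join(members) + "}"


if __name__ == "__main__":
    level = "[Geography].[Sales Location].[Sales Location]"
    print(build_member_set(level, ["United States", "Canada"]))
    # {[Geography].[Sales Location].[Sales Location].&[United States], [Geography].[Sales Location].[Sales Location].&[Canada]}
```

For a user with no rows the sketch returns "{}"; how the role behaves with an empty allowed set is worth verifying on your own server before relying on it.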

The SSAS stored procedure is then compiled into a .NET assembly (SSASSecurity.dll) and deployed to the SSAS server. I usually place the DLL in the Drive:\Program Files\Microsoft SQL Server\MSSQL.#\OLAP\bin\ directory. In this particular instance, I registered the assembly at the server scope so that any database on the SSAS server can use it; it could just as well be registered at the database scope.

Note that the assembly must be registered with at least the "External access" permission set for the GetFilteredSet_SalesLocation() method to function properly. It must also run under an account that has permission to execute the [usp_GetUserSalesLoc] SQL Server stored procedure, wherever that procedure is located.

Finally, we defined a single SSAS database role and added all user logins to its membership collection. Role members have read access to the cubes and dimensions. For the dimension hierarchy of interest, we placed a call to the GetFilteredSet_SalesLocation() SSAS stored procedure in the MDX expression of the Allowed member set. The STRTOSET MDX function converts the output string into a valid MDX set, and the USERNAME MDX function returns the login name (in DOMAIN\alias form) of the current user.

The allowed member set can be defined on either a database dimension or a cube dimension. If a dimension is referenced by several cubes in the database, it may be preferable to define the allowed member set at the database level and let the cube dimensions inherit the filter. By enabling Visual Totals, we excluded the denied members from contributing to the aggregate values.
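The effect of Visual Totals can be illustrated with a deliberately simplified Python model: a single level of sales locations, made-up sales amounts, and plain summation. SALES, apply_allowed_set() and SecuredView are hypothetical names for this illustration only; SSAS itself evaluates these rules inside the engine and handles far more than a single-level sum.

```python
from dataclasses import dataclass

# Hypothetical fact data: sales amount per sales location (illustrative numbers only).
SALES = {"United States": 500, "Canada": 200, "Hong Kong": 150, "Germany": 100, "UK": 50}


@dataclass
class SecuredView:
    rows: dict[str, int]  # the location members the user is allowed to see
    all_total: int        # value reported for the [All] member


def apply_allowed_set(sales: dict[str, int], allowed: set[str], visual_totals: bool) -> SecuredView:
    """Filter members by the allowed set and compute the [All] total.

    With visual totals enabled, [All] aggregates only the allowed members;
    with it disabled, [All] still reflects every location, which can let a
    user infer the combined value of members they cannot see.
    """
    rows = {loc: amt for loc, amt in sales.items() if loc in allowed}
    total = sum(rows.values()) if visual_totals else sum(sales.values())
    return SecuredView(rows, total)


if __name__ == "__main__":
    usera = {"United States", "Canada"}
    print(apply_allowed_set(SALES, usera, visual_totals=True).all_total)   # 700
    print(apply_allowed_set(SALES, usera, visual_totals=False).all_total)  # 1000
```

With usera's allowed set {United States, Canada}, the [All] total is 700 with Visual Totals enabled and 1,000 without, in which case usera could infer that 300 in sales belongs to locations they cannot see.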

That's it. Users are now restricted to cube data for the sales locations they are authorized to see. This security scheme works nicely with Excel when the workbook data connections use integrated security.

There are a few approaches to implementing dynamic dimension security in SSAS, as you will find in a number of writings both online and offline. I found the SSAS stored procedure approach to be the most straightforward of them all and the least invasive to implement. Furthermore, changes to security restrictions take effect much more immediately, because the restrictions are queried directly from the relational database each time. However, we do need to ensure that the SQL Server instance and the database where the [usp_GetUserSalesLoc] stored procedure resides are up and running at all times.

I recently sat the 70-445 and 70-446 exams for MCTS (SQL Server 2005 BI) and MCITP (BI Developer), respectively, taking them about a week and a half apart. Although I passed both on my first try, which I am very pleased about, I had planned to use Microsoft's free second-chance promotion vouchers just in case.